
Two recent papers from the Computational and Mathematical Neuroscience group at CRM ask what makes neural circuits drift in the first place, and what keeps them from collapsing under their own learning rules. One, published in PNAS, traces representational drift in the mouse auditory cortex to an ongoing balance between learning and stochastic synaptic change. The other, in the Journal of Computational Neuroscience, shows that locally balanced inhibition keeps feedforward networks from collapsing into rigid, input-insensitive states.

You’re about to leave the house when you notice the first drops on the window. The rain registers, you reach for the umbrella by the door, and you step out into the morning, hoping to stay more or less dry. An input has come in, an output has followed, and that’s roughly what a memory system is for. Most of the time, the whole cycle runs without you noticing.

But memory can fail in different ways. The system might stop listening, so you grab an umbrella every time you leave the house, whether it rains or not. Or nothing sticks: every fresh piece of information overwrites what came before, and by the time you reach the door, you’ve forgotten where you were going.

Two recent papers from the Computational and Mathematical Neuroscience group at the CRM examine how the brain keeps itself poised between rigidity and chaos. One, by Jens-Bastian Eppler in collaboration with Thomas Lai, Dominik Aschauer, Simon Rumpel and Matthias Kaschube, was recently published in PNAS. The other, by Gloria Cecchini and Alex Roxin, has just appeared in the Journal of Computational Neuroscience. They approach the same problem from opposite ends: drift on one side, freezing on the other.

A system that is always in motion

If you record the neurons in the auditory cortex of a mouse hearing the same sound day after day, they don’t keep responding the same way. Their responses drift. The set of cells active on Monday is not the set of cells active on Friday. The animal still hears the sound and its behaviour stays roughly the same, but the underlying code keeps shifting.

Eppler et al.: Two-photon image of mouse auditory cortex. Red marks all neurons, green marks their activity. Together, the two channels allow the same cells to be tracked across imaging sessions.

This is called representational drift, and for a long time, it was invisible. The technology was the bottleneck. “Before two-photon imaging, you only had snapshots,” Eppler explains. “You could record a few neurons at once, but only at a single moment in time. And because nothing obvious seemed to change in the absence of explicit learning on the level of behaviour, the working assumption was that the brain was largely stable at rest.” Once researchers could track individual neurons over weeks, that assumption no longer held. There was no such thing as a static brain.

The puzzle goes deeper than the technology. Long-term changes in neurons were thought to pair with long-term changes in behaviour: an animal learns, the circuit reorganises, and a new behaviour appears. Drift isn’t like that. The responses reorganise, but the behaviour stays put. As Roxin puts it, “representational drift is an example of long-term changes in neuronal activity even when the behaviour is fixed and stable. That is still a mystery.”

“Drift isn’t separate from learning, it is learning. The catch is that any system of finite size can’t keep accumulating new information indefinitely. At some point, something has to give way, and older representations get overwritten.”

Bastian Eppler

Eppler had to choose where to look, and the mouse auditory cortex was a practical choice. “In mice, the visual system is comparatively underdeveloped, but the auditory cortex is proportionally larger than in humans. Hearing is very important for them,” he says. “There’s also a real experimental control advantage. You can define and deliver sounds with complete precision. In vision, a mouse might look away, and you have to account for that. In audition, if you play a sound, the animal hears it.” Drift had already been documented there, which made it a natural place to start.

To understand why drift happens, Eppler turned to two classical tools. Signal correlations capture how similarly two neurons are tuned, that is, whether they respond to the same stimuli. Noise correlations measure how their activity covaries from trial to trial when the stimulus stays the same. In a single recording, neither tells you much about cause and effect. Tracked across days, they provided a more complete picture.
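To make the two measures concrete, here is a minimal sketch, assuming each neuron’s responses are stored as `resp[s][t]`, its activity to stimulus `s` on trial `t`. The function names and toy numbers are illustrative, not taken from the paper. The signal correlation correlates the two neurons’ trial-averaged responses across stimuli; the noise correlation correlates what is left after subtracting those averages, pooled over all stimuli (one common convention among several).

```python
import math
import statistics

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def signal_correlation(resp_a, resp_b):
    """Correlate the two neurons' mean responses (tuning curves) across stimuli."""
    tuning_a = [statistics.fmean(trials) for trials in resp_a]
    tuning_b = [statistics.fmean(trials) for trials in resp_b]
    return pearson(tuning_a, tuning_b)

def noise_correlation(resp_a, resp_b):
    """Correlate trial-to-trial deviations from each stimulus mean, pooled over stimuli."""
    dev_a, dev_b = [], []
    for trials_a, trials_b in zip(resp_a, resp_b):
        mean_a, mean_b = statistics.fmean(trials_a), statistics.fmean(trials_b)
        dev_a += [v - mean_a for v in trials_a]
        dev_b += [v - mean_b for v in trials_b]
    return pearson(dev_a, dev_b)

# resp[s][t]: response to stimulus s on trial t (toy numbers: 3 stimuli x 2 trials)
resp_a = [[1, 3], [5, 7], [9, 11]]
resp_b = [[4, 2], [8, 6], [12, 10]]  # same tuning as A, opposite trial-to-trial noise
print(signal_correlation(resp_a, resp_b))  # 1.0
print(noise_correlation(resp_a, resp_b))   # -1.0
```

The toy pair shares its tuning perfectly while its trial-to-trial fluctuations pull in opposite directions, which is why the two measures carry different information.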

They found an asymmetry. Signal correlations on one day predicted noise correlations a few days later. The reverse did not hold. Activity at one moment was leaving a trace in the wiring later on. The wiring wasn’t reaching back to rewrite the activity that produced it. “Honestly, our initial intuition was the opposite,” Eppler says. “We expected that if anything were stabilising the system, it would be the network, that the underlying connectivity would act as an anchor and constrain the activity patterns over time.”
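The logic of the lagged comparison can be sketched on synthetic data. In this entirely hypothetical toy, each list entry stands for one neuron pair; tomorrow’s noise-correlation score is built to inherit part of today’s signal-correlation score, while today’s noise score feeds nothing forward. The asymmetry is therefore put in by construction: the code shows how the forward and backward tests are computed, not evidence for the finding.

```python
import math
import random
import statistics

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def simulate_pairs(n_pairs, rng):
    """Synthetic signal (sc) and noise (nc) correlation scores on day 0 and day 1.

    By construction (a hypothetical toy): day-1 noise scores inherit day-0
    signal scores, while day-0 noise scores influence nothing later.
    """
    sc0 = [rng.gauss(0.0, 1.0) for _ in range(n_pairs)]
    nc0 = [rng.gauss(0.0, 1.0) for _ in range(n_pairs)]
    sc1 = [0.5 * s + rng.gauss(0.0, 1.0) for s in sc0]        # tuning drifts
    nc1 = [0.8 * s + 0.3 * rng.gauss(0.0, 1.0) for s in sc0]  # activity -> wiring
    return sc0, nc0, sc1, nc1

rng = random.Random(1)
sc0, nc0, sc1, nc1 = simulate_pairs(500, rng)
forward = pearson(sc0, nc1)   # does today's signal predict later noise?
backward = pearson(nc0, sc1)  # does today's noise predict later signal?
print(f"forward: {forward:.2f}  backward: {backward:.2f}")
```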

The asymmetry turned out to be a direct consequence of Hebb’s 1949 rule. Neurons that fire together, wire together. Activity shapes the connectivity that follows it; the connectivity can’t reach back. The result was surprising in the moment, then obvious in hindsight.
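In its simplest textbook form, Hebb’s rule can be written as below, where x_j(t) is the activity of presynaptic neuron j, y_i(t) that of postsynaptic neuron i, w_ij the connection from j to i, and eta > 0 a learning rate. This is the generic rule, not necessarily the exact form used in either paper. The time index makes the asymmetry explicit: activity at time t sets the weights at t+1, and nothing in the rule revises activity that has already happened.

```latex
\Delta w_{ij}(t) = \eta \, y_i(t) \, x_j(t),
\qquad
w_{ij}(t+1) = w_{ij}(t) + \Delta w_{ij}(t)
```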

The model combines two ingredients: a stochastic process that keeps the network drifting, and Hebbian learning that reins it in. Neither alone reproduces the data; both together do. The result is a circuit that keeps reorganising without falling apart. In this picture, drift and learning are the same process viewed at different timescales.
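A toy version of the two-ingredient idea fits in a few lines. This is an assumption-laden illustration, not the model from the PNAS paper: the function `drift_step`, the unit-length rescaling of each neuron’s weights (a stand-in for finite synaptic resources) and all parameter values are hypothetical. `eta` sets the strength of the Hebbian term and `sigma` the scale of the random synaptic changes.

```python
import math
import random

def drift_step(w, pre, eta, sigma, rng):
    """One update of a hypothetical weight matrix w[i][j] (input j -> neuron i).

    post[i] = sum_j w[i][j] * pre[j]     response to the stimulus
    + eta * post[i] * pre[j]             Hebbian term: activity shapes wiring
    + sigma * N(0, 1) per synapse        stochastic synaptic change
    Each row is then rescaled to unit length, a stand-in for the finite
    resources of a real neuron (our assumption, not the paper's).
    """
    post = [sum(wij * xj for wij, xj in zip(row, pre)) for row in w]
    new_w = []
    for i, row in enumerate(w):
        updated = [wij + eta * post[i] * xj + sigma * rng.gauss(0.0, 1.0)
                   for wij, xj in zip(row, pre)]
        norm = math.sqrt(sum(v * v for v in updated))
        new_w.append([v / norm for v in updated])
    return new_w

rng = random.Random(0)
stimulus = [1.0, 0.0, 1.0, 0.0, 1.0]  # one sound, played every day
w = [[rng.gauss(0.0, 1.0) for _ in range(5)] for _ in range(3)]
for _ in range(30):
    w = drift_step(w, stimulus, eta=0.05, sigma=0.05, rng=rng)
```

Setting `sigma=0` leaves pure Hebbian learning, and in this toy every row then lines up with the stimulus direction; setting `eta=0` leaves only random changes, a random walk that ignores the stimulus. The two parameters let you explore the balance between them.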

“Drift isn’t separate from learning,” Eppler says. “It is learning. The catch is that any system of finite size can’t keep accumulating new information indefinitely. At some point, something has to give way, and older representations get overwritten, which is actually a feature, not a bug. You really want to remember the last meal you had, in case you get sick. You probably don’t need to remember exactly what you had for lunch two Tuesdays ago. Forgetting is the system making room for what matters now.”

When the network won’t budge

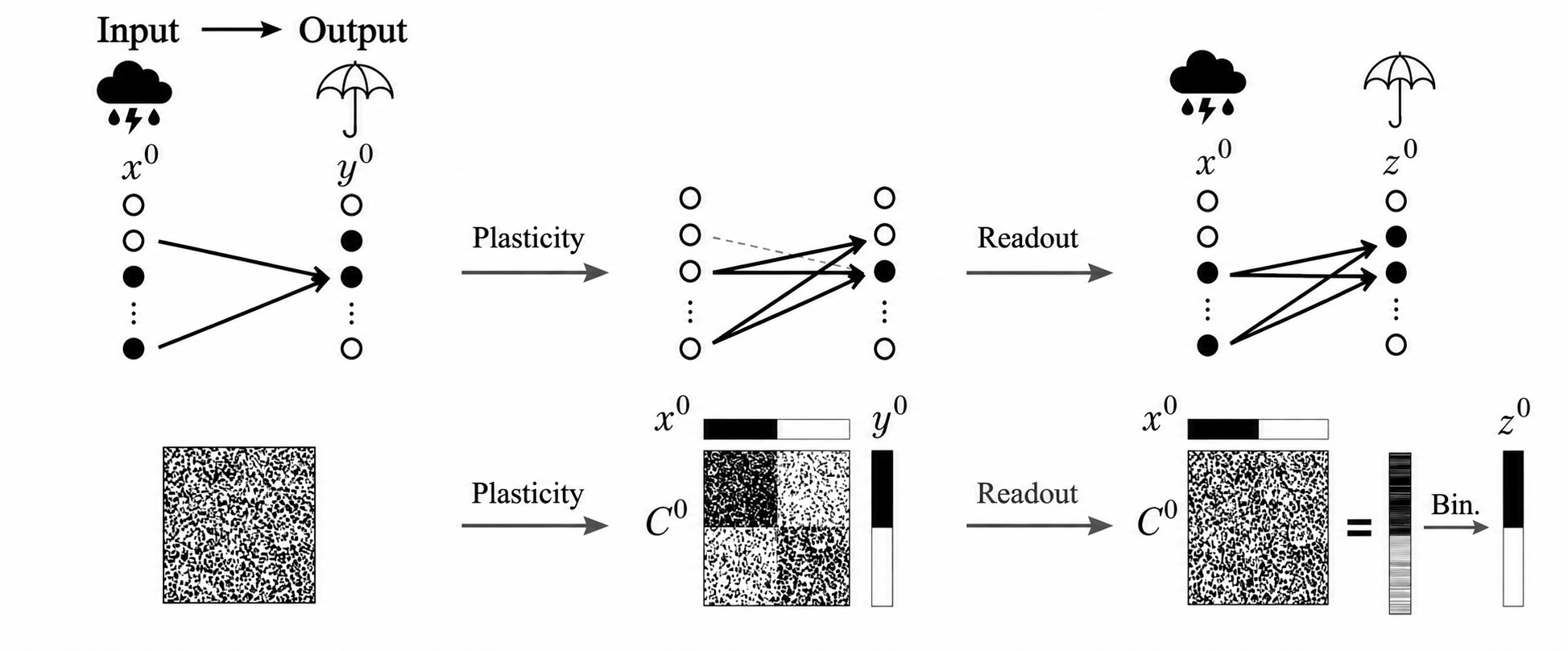

Cecchini and Roxin apply the same kind of Hebbian rule in a different setup. In classical models, including their group’s earlier work (Devalle et al., 2025), the network is told what its outputs should be. In the updated model, the output emerges from the inputs themselves, the way a real neuron is driven by the cells projecting to it. Hippocampal CA1 neurons, for instance, are driven by inputs from CA3 and other regions.

“Inhibition may help neural circuits maintain the balance between stability and adaptability.”

Gloria Cecchini

What Cecchini and Roxin find is that the network breaks. A positive feedback loop takes over: neurons with more connections fire more frequently, leading Hebbian learning to strengthen those links further. The active cells eventually dominate while the rest fall silent. Within a few learning steps, the system begins producing the same output regardless of the input.
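The runaway loop can be reproduced in a deliberately crude toy. Every assumption here is ours, not the paper’s: a single feedforward layer, binary inputs with exactly 10 of 20 lines active, one winning output neuron per input (winner-take-all), and a plain Hebbian update in which only the winner strengthens its active inputs, with no inhibition. Names and parameters are hypothetical.

```python
import random
from collections import Counter

N_IN, N_OUT, K_ACTIVE, ETA = 20, 10, 10, 0.5  # hypothetical toy sizes

def random_pattern(rng):
    """Binary input with exactly K_ACTIVE of the N_IN lines switched on."""
    on = set(rng.sample(range(N_IN), K_ACTIVE))
    return [1 if j in on else 0 for j in range(N_IN)]

def winner(w, x):
    """Index of the output neuron with the largest excitatory drive to x."""
    drives = [sum(wij * xj for wij, xj in zip(row, x)) for row in w]
    return max(range(len(w)), key=drives.__getitem__)

def train(w, patterns):
    """Plain Hebbian learning, no inhibition: the winner strengthens its active inputs."""
    for x in patterns:
        i = winner(w, x)
        w[i] = [wij + ETA * xj for wij, xj in zip(w[i], x)]
    return w

rng = random.Random(0)
w = [[rng.random() for _ in range(N_IN)] for _ in range(N_OUT)]
w = train(w, [random_pattern(rng) for _ in range(200)])

# After learning, ask which neuron answers each of 100 fresh inputs.
counts = Counter(winner(w, random_pattern(rng)) for _ in range(100))
frozen_fraction = counts.most_common(1)[0][1] / 100
print(f"most common winner answers {frozen_fraction:.0%} of new inputs")
```

Whichever neuron wins early gains weight on many lines, which raises its drive for almost every later input, so it keeps winning. In this toy a single neuron ends up answering nearly every new input: the same output whatever the weather.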

This is the umbrella problem from before, in more formal terms.

“A frozen network would not learn other associations,” Cecchini explains. “It would give the same response to any input, which means that whatever the weather is like, you would still take an umbrella.”

Cecchini-Roxin: Schematic of associative learning in a feedforward network. An input pattern (cloud) is linked to a target output (umbrella) by reshaping synaptic connections.

The paper proposes a fix. If each neuron also receives an inhibitory input that scales with the strength of its own incoming excitatory connections (what the authors call locally balanced inhibition), the runaway dynamics disappear and the network keeps responding differently to different inputs. The amount of inhibition needed has a clean mathematical relationship to the network’s coding fraction, the fraction of cells active at any one time: when the inhibition matches that fraction, flexibility is fully restored.
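One simple way to read “inhibition that scales with the strength of its own incoming excitatory connections” is to subtract, from each neuron’s excitatory drive, a constant times the sum of its incoming weights. This reading, the function `drives` and the numbers below are our assumptions, not the paper’s formulation. If every input pattern has exactly a fraction f of its lines active, a neuron’s average excitatory drive is f times its total weight, so setting the inhibition constant equal to f cancels the advantage that comes from simply having more or stronger connections and leaves only selectivity.

```python
def drives(w, x, inhibition):
    """Net drive of each output neuron to binary input x.

    Excitation: sum_j w[i][j] * x[j].
    Locally balanced inhibition (our reading, an assumption): subtract
    `inhibition` times the neuron's total incoming excitatory weight.
    """
    return [sum(wij * xj for wij, xj in zip(row, x)) - inhibition * sum(row)
            for row in w]

N_IN = 8
pattern = [1, 1, 1, 1, 0, 0, 0, 0]  # coding fraction f = 4/8
f = sum(pattern) / N_IN
w = [
    [2.0] * N_IN,            # neuron 0: strong but unselective
    [1.0] * 4 + [0.0] * 4,   # neuron 1: tuned to `pattern`
]
no_inh = drives(w, pattern, inhibition=0.0)  # [8.0, 4.0]
balanced = drives(w, pattern, inhibition=f)  # [0.0, 2.0]
print(no_inh, balanced)
```

Without inhibition, the strong but unselective neuron 0 wins even on the pattern neuron 1 is tuned to. With inhibition set to the coding fraction, neuron 0’s net drive drops to zero, as it would for any input with four active lines, and the tuned neuron wins.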

The freezing problem came first; the fix came after. Starting from this biologically motivated version of Hebbian learning, the pathology showed up almost immediately. Locally balanced inhibition then suggested itself, with a substantial existing literature on excitation-inhibition balance to draw on.

But the inhibition didn’t just prevent the failure; it also improved performance. Memory traces decayed more slowly, and the network could store more associations than the classical version. “The result was not just a correction of a failure mode, but a genuine performance boost,” Cecchini says.

There’s a caveat. With a feedforward Hebbian network, forgetting cannot be eliminated. Locally balanced inhibition postpones it, but it doesn’t remove it. Memories still fade eventually; what changes is how long they stay strong before they do.

Forgetting is still there. It just runs slowly enough to be useful.

Read side by side

Both papers come out of the same lab, where Cecchini and Eppler both work with Alex Roxin on the same broad question: why neural activity keeps changing while behaviour stays stable. They approach it with different tools. Cecchini builds mathematical models of feedforward learning, while Eppler analyses chronic recordings from awake mice. The two papers also belong to a wider conversation. The Computational and Mathematical Neuroscience research group is active, hosting weekly group meetings and contributing to scientific meetings and conferences across Barcelona’s broader computational neuroscience community. Both papers carry traces of that exchange.

Read together, they reach the same general diagnosis. Hebbian learning on its own doesn’t quite work. It needs a counterweight. In Eppler and Lai’s model, the counterweight is stochastic synaptic noise. The drift their model reproduces is what happens when Hebbian plasticity balances a steady churn of random change. Remove either ingredient, and the data stops fitting.

For Cecchini and Roxin, the counterweight comes from inhibition. Without it, Hebbian dynamics in their input-driven setup lead to a runaway feedback loop, and the network freezes. With it, the same rule keeps the network responsive across many inputs.

“Behaviour changes over the lifetime of an organism. Understanding these slow, long-term changes requires access to the neuronal mechanisms underlying them.”

Alex Roxin

Both papers also take a position on what a computational model is for. “A model’s main value isn’t to reproduce the data,” Eppler says. “Plenty of models can do that. The real value is mechanistic insight.” His paper isn’t trying to fit drift. It’s asking what kind of plasticity rule produces drift, and the answer is concrete: neither random change alone nor Hebbian learning alone can do it. You need both. That conclusion isn’t visible in the data on its own.

Roxin makes a related point. He cites the Hopfield network, the foundational model of associative memory from 1982. It has never been fitted to experimental data, and it couldn’t be. “Obtaining a good fit to data says nothing about the usefulness of a model for understanding anything,” he says. What it provides is a clear mechanism, which is what gives it its longevity.
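For readers who have not met it, the core of the Hopfield network fits in a few lines: patterns of +1/-1 units are stored with a Hebbian outer-product rule, and a corrupted cue is cleaned up by repeatedly setting each unit to the sign of its summed input. This is a textbook sketch that uses synchronous updates for brevity (Hopfield’s original formulation updates units one at a time); the function names and patterns are illustrative.

```python
def store(patterns):
    """Hebbian outer-product rule: W[i][j] = (1/N) * sum_p p[i] * p[j], no self-connections."""
    n = len(patterns[0])
    return [[0.0 if i == j else sum(p[i] * p[j] for p in patterns) / n
             for j in range(n)]
            for i in range(n)]

def recall(w, cue, steps=5):
    """Synchronous updates: each unit takes the sign of its summed input."""
    s = list(cue)
    for _ in range(steps):
        s = [1 if sum(wij * sj for wij, sj in zip(row, s)) >= 0 else -1 for row in w]
    return s

p1 = [1, 1, 1, 1, -1, -1, -1, -1]
p2 = [1, -1, 1, -1, 1, -1, 1, -1]
w = store([p1, p2])
cue = [-1] + p1[1:]    # p1 with its first unit flipped
print(recall(w, cue))  # [1, 1, 1, 1, -1, -1, -1, -1]
```

Nothing here is fitted to data; the point, as in the quote, is that the mechanism (Hebbian storage plus attractor dynamics) is fully visible.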

The work continues. Eppler has turned to a follow-up puzzle: if the responses drift, why does perception stay intact? We still recognise our favourite songs from childhood, decades after we first heard them, even though by now the underlying neural code has rewritten itself many times over. His current hypothesis is about random projections. Random networks have a surprising feature: they preserve the topology of their inputs in their outputs. Which stimuli are similar to which is preserved, even when the patterns encoding them shift. The structure stays put while the code moves around.
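The property Eppler is pointing to can be illustrated with a generic random projection, closely related to the Johnson-Lindenstrauss lemma. This is not his model: the Gaussian matrices, the dimensions and the idea of modelling drift as redrawing the whole projection are our assumptions. Two different random matrices stand for the code on two different days. The code for the same stimulus changes almost completely between them, yet on both days a stimulus similar to `a` stays much closer to it than an unrelated one does.

```python
import math
import random

def random_projection(n_in, n_out, rng):
    """Random matrix with i.i.d. N(0, 1/n_out) entries; approximately preserves distances."""
    scale = 1.0 / math.sqrt(n_out)
    return [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]

def project(p, v):
    """Matrix-vector product p @ v."""
    return [sum(pij * vj for pij, vj in zip(row, v)) for row in p]

def cosine(u, v):
    """Cosine similarity of two vectors."""
    return sum(x * y for x, y in zip(u, v)) / (math.hypot(*u) * math.hypot(*v))

rng = random.Random(42)
n_in, n_out = 50, 200
a = [rng.gauss(0.0, 1.0) for _ in range(n_in)]    # a stimulus
b = [ai + 0.1 * rng.gauss(0.0, 1.0) for ai in a]  # a similar stimulus
c = [rng.gauss(0.0, 1.0) for _ in range(n_in)]    # an unrelated stimulus

p_monday = random_projection(n_in, n_out, rng)
p_friday = random_projection(n_in, n_out, rng)    # the "drifted" code

checks = []
for p in (p_monday, p_friday):
    pa, pb, pc = project(p, a), project(p, b), project(p, c)
    checks.append((math.dist(pa, pb), math.dist(pa, pc)))

overlap = cosine(project(p_monday, a), project(p_friday, a))
print(checks)  # (distance a-b, distance a-c) for each day
print(f"overlap of the two codes for the same stimulus: {overlap:.2f}")
```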

Cecchini and Roxin continue to work on plasticity rules in feedforward networks and on representational drift. Roxin is also widening his interests. “I am very interested in understanding principles of generalised intelligence, as opposed to neuroscience,” he says. “I think this is where the future lies.”

References

Cecchini, G., Roxin, A. (2026). Locally balanced inhibition allows for robust learning of input-output associations in feedforward networks with Hebbian plasticity. Journal of Computational Neuroscience. https://doi.org/10.1007/s10827-026-00932-x

Eppler, J.B., Lai, T., Aschauer, D., Rumpel, S., Kaschube, M. (2026). Representational drift reflects ongoing balancing of stochastic changes by Hebbian learning. PNAS 123(5). https://doi.org/10.1073/pnas.2503046123


CRM CommPau Varela


The post What memory has to balance: Representational drift, network freezing, and the mechanisms that hold neural circuits in between first appeared on Centre de Recerca Matemàtica.